While all DAGs can run on a schedule defined in their code, you can manually trigger a DAG run at any time from the Airflow UI.

Once you unpause it, the DAG starts to run on the schedule defined in its code.

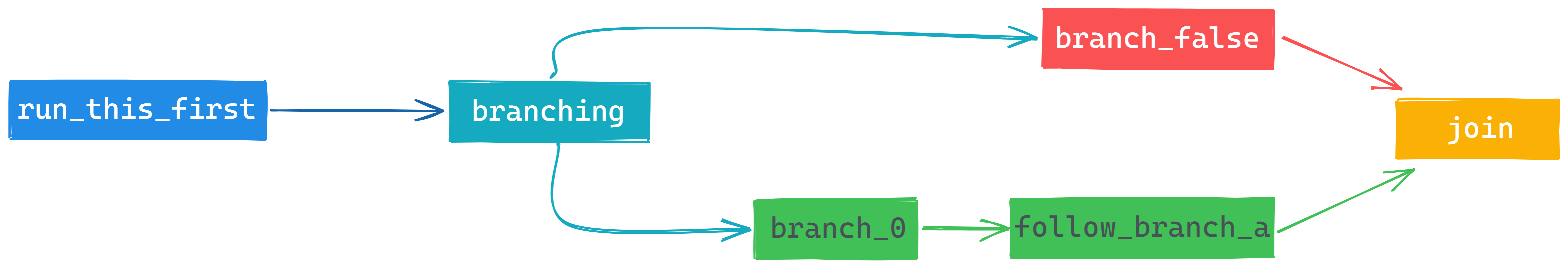

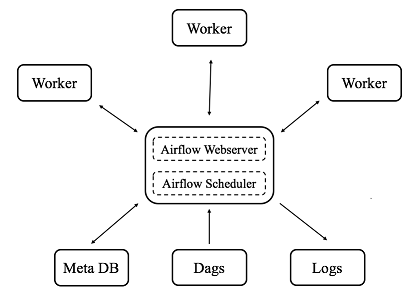

To unpause example-dag-basic, click the slider button next to its name. To provide a basic demonstration of an ETL pipeline, this DAG creates an example JSON string, calculates a value based on the string, and prints the results of the calculation to the Airflow logs.īefore you can run any DAG in Airflow, you have to unpause it. Let's trigger a run of the example-dag-basic DAG that was generated with your Astro project. Let's fix that! Step 4: Trigger a DAG run Ī DAG run is an instance of a DAG running on a specific date. Because you haven't run any DAGs yet, the Runs and Recent Tasks sections are empty. The default page in the Airflow UI is the DAGs page, which shows an overview of all DAGs in your Airflow environment:Įach DAG is listed with a few of its properties, including tags, owner, previous runs, schedule, timestamp of the last and next run, and the states of recent tasks. To access the Airflow UI, open in a browser and log in with admin for both your username and password. It contains information about your current DAG runs and is the best place to create and update Airflow connections to third-party data services. The Airflow UI is essential for managing Airflow. In your terminal, open your Astro project directory and run the following command: Now that you have an Astro project ready, the next step is to actually start Airflow on your machine. For advanced use cases, you can also configure this file with Docker-based commands to run locally at build time. The CLI generates new Astro projects with the latest version of Runtime, which is equivalent to the latest version of Airflow. Dockerfile: This is where you specify your version of Astro Runtime, which is a runtime software based on Apache Airflow that is built and maintained by Astronomer.For more information on DAGs, see Introduction to Airflow DAGs. Each Astro project includes two example DAGs: example-dag-basic and example-dag-advanced. 
For this tutorial, you only need to know the following files and folders: The default Astro project structure includes a collection of folders and files that you can use to run and customize Airflow. All you need to know is that Airflow runs on the compute resources of your machine, and that all necessary files for running Airflow are included in your Astro project. For this tutorial, you don't need an in-depth knowledge of Docker. When you run Airflow on your machine with the Astro CLI, Docker creates a container for each Airflow component that is required to run DAGs. Docker is a service to run software in virtualized containers within a machine. The Astro project is built to run Airflow with Docker. To run data pipelines on Astro, you first need to create an Astro project, which contains the set of files necessary to run Airflow locally.Ĭreate a new directory for your Astro project: A local installation of Python 3 to improve your Python developer experience. An integrated development environment (IDE) for Python development, such as VSCode.This is pre-installed on most operating systems. A terminal that accepts bash commands.To get the most out of this tutorial, make sure you have an understanding of: This tutorial takes approximately 1 hour to complete. Run a local Airflow environment using the Astro CLI.This tutorial is for people who are new to Apache Airflow and want to run it locally with open source tools.Īfter you complete this tutorial, you'll be able to: Getting started with Apache Airflow locally is easy with the Astro CLI. Get started with Apache Airflow, Part 1: Write and run your first DAG
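To make the ETL steps attributed to example-dag-basic more concrete, here is a minimal plain-Python sketch of the same three stages: create a JSON string, calculate a value from it, and print the result. This is not the DAG's actual source. The function names, the `ORDER_DATA` sample values, and the choice of a sum as the calculated value are all assumptions made for illustration, and the sketch deliberately avoids the Airflow API so that it runs without an Airflow installation.

```python
import json

# Hypothetical sample data; the tutorial does not show the DAG's real JSON string.
ORDER_DATA = '{"1001": 301.27, "1002": 433.21, "1003": 502.22}'


def extract(raw: str) -> dict:
    """Extract: parse the example JSON string into a dictionary."""
    return json.loads(raw)


def transform(orders: dict) -> dict:
    """Transform: calculate one value from the data (assumed here to be a sum)."""
    return {"total_order_value": sum(orders.values())}


def load(summary: dict) -> str:
    """Load: print the result. Output printed inside an Airflow task
    is captured in that task's logs."""
    message = f"Total order value is: {summary['total_order_value']:.2f}"
    print(message)
    return message


if __name__ == "__main__":
    # Chain the three stages in order, as a pipeline would.
    load(transform(extract(ORDER_DATA)))
```

In an Airflow DAG, each of these functions would typically become its own task, with the scheduler running them in dependency order and the UI showing the state of each one.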
